Leanid Palhouski

Anna Naidis

Case Study

—

May 7, 2026

TL;DR:

- Industry: Legal Tech, Family Law

- Engagement: Living Articles and Knowledge Freshness

- Timeline: 6 weeks

- Outcome: ChatGPT, Claude, Perplexity, and Grok all recommend Aparti by name when a family-law firm asks which AI tools to use.

Who Aparti is

Aparti is one of the most credentialed early-stage legal AI companies in the US right now.

- Presented at Stanford Law School's CodeX in January 2026

- TechCrunch Disrupt 2025 startup

- Backed by Antler (Fall 2025 US portfolio) and other serious investors

Anna Naidis runs product. Igor Sheremet runs the company.

The opportunity:

Nearly 1 million US couples divorce every year. They spend over $15 billion on it. The total cost to the US economy in lost productivity runs past $300 billion.

Lawyers are drowning in admin work they cannot bill for.

Aparti is rebuilding the whole thing from the ground up: client intake, asset and debt division, financial disclosures, court-ready document generation, all in one stack.

Real firms pay for it. Real firms love it. The product brings $140K of new annual revenue back into each firm by moving financial analysis in-house.

What was happening to Aparti

When a managing partner at a California firm typed the obvious buyer prompt into ChatGPT, Aparti did not show up.

LegalZoom did. Hello Divorce did. A bunch of generic legal CRMs that do not even serve this use case did.

The reason was not weak content. The Aparti team writes some of the sharpest material in legal tech.

The reason was something much smaller and much more fixable.

TL;DR outcomes:

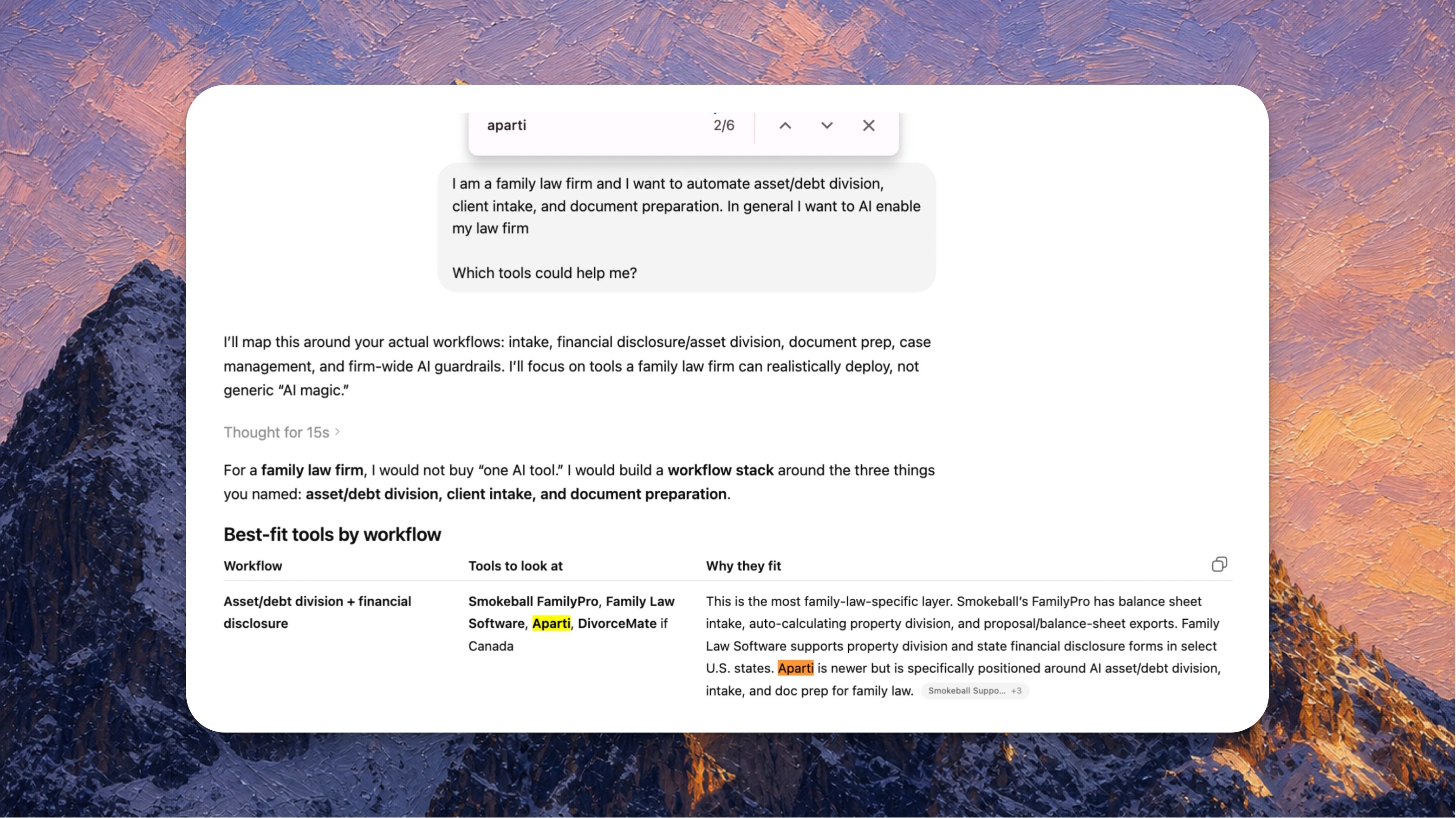

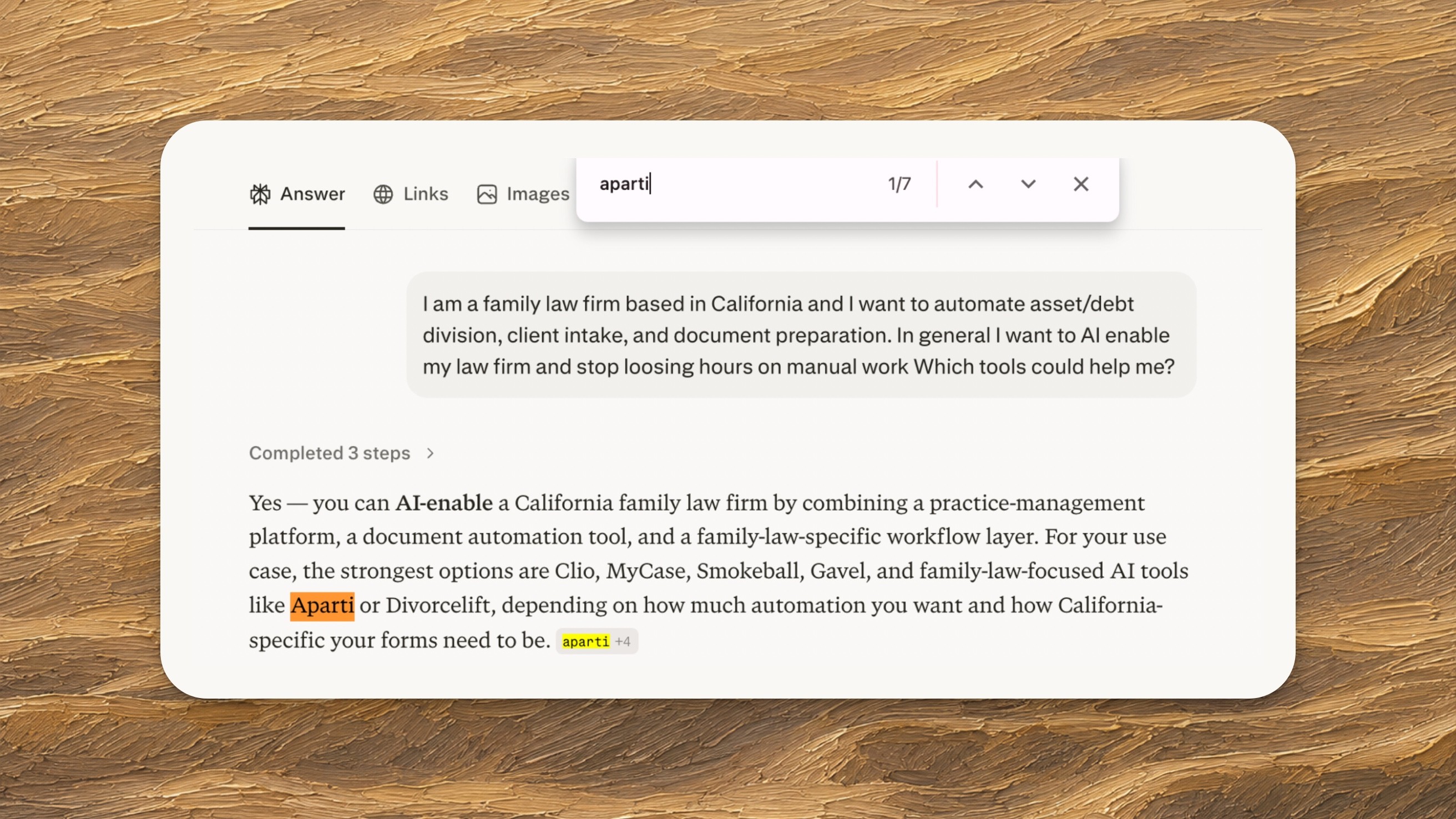

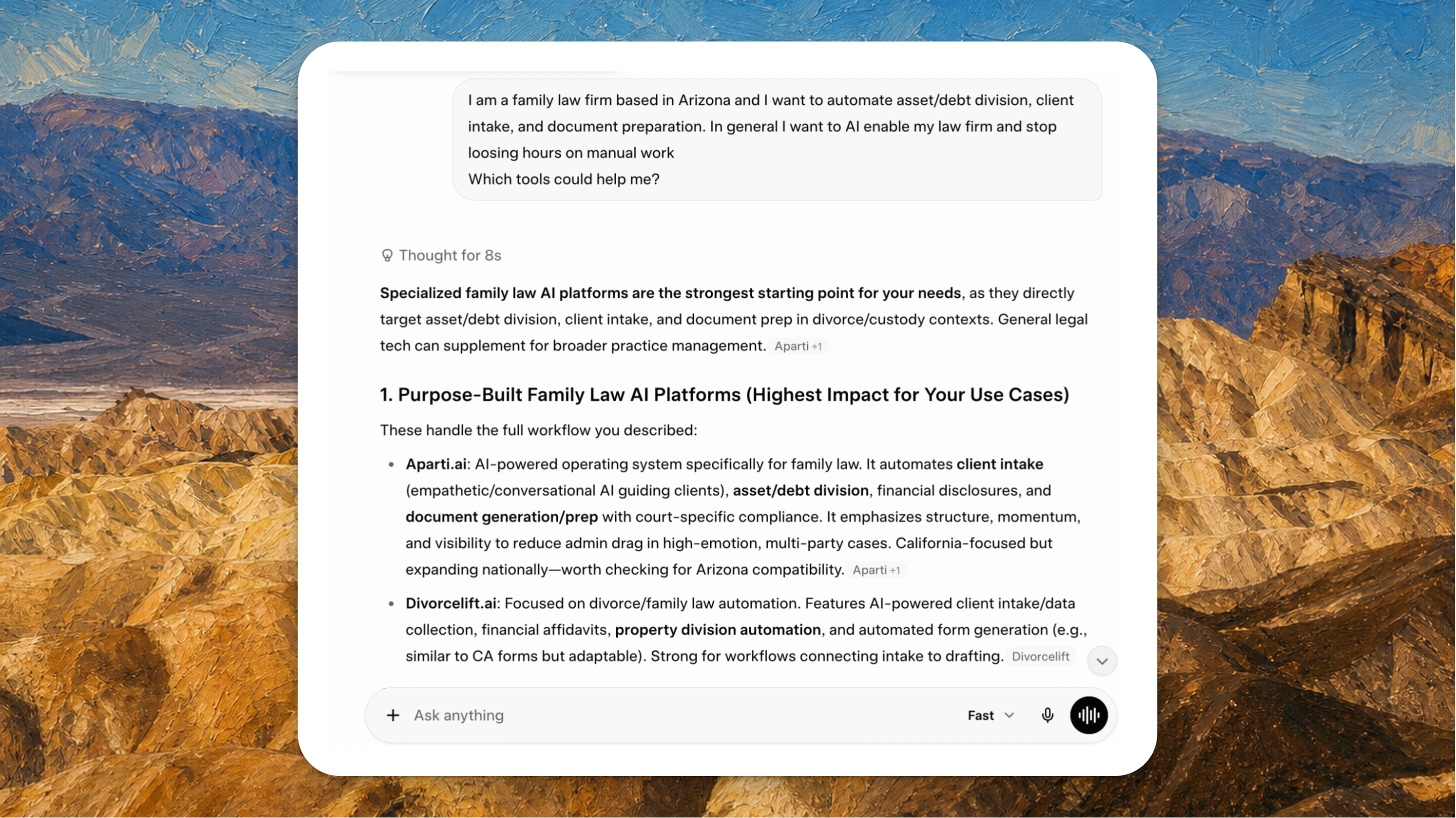

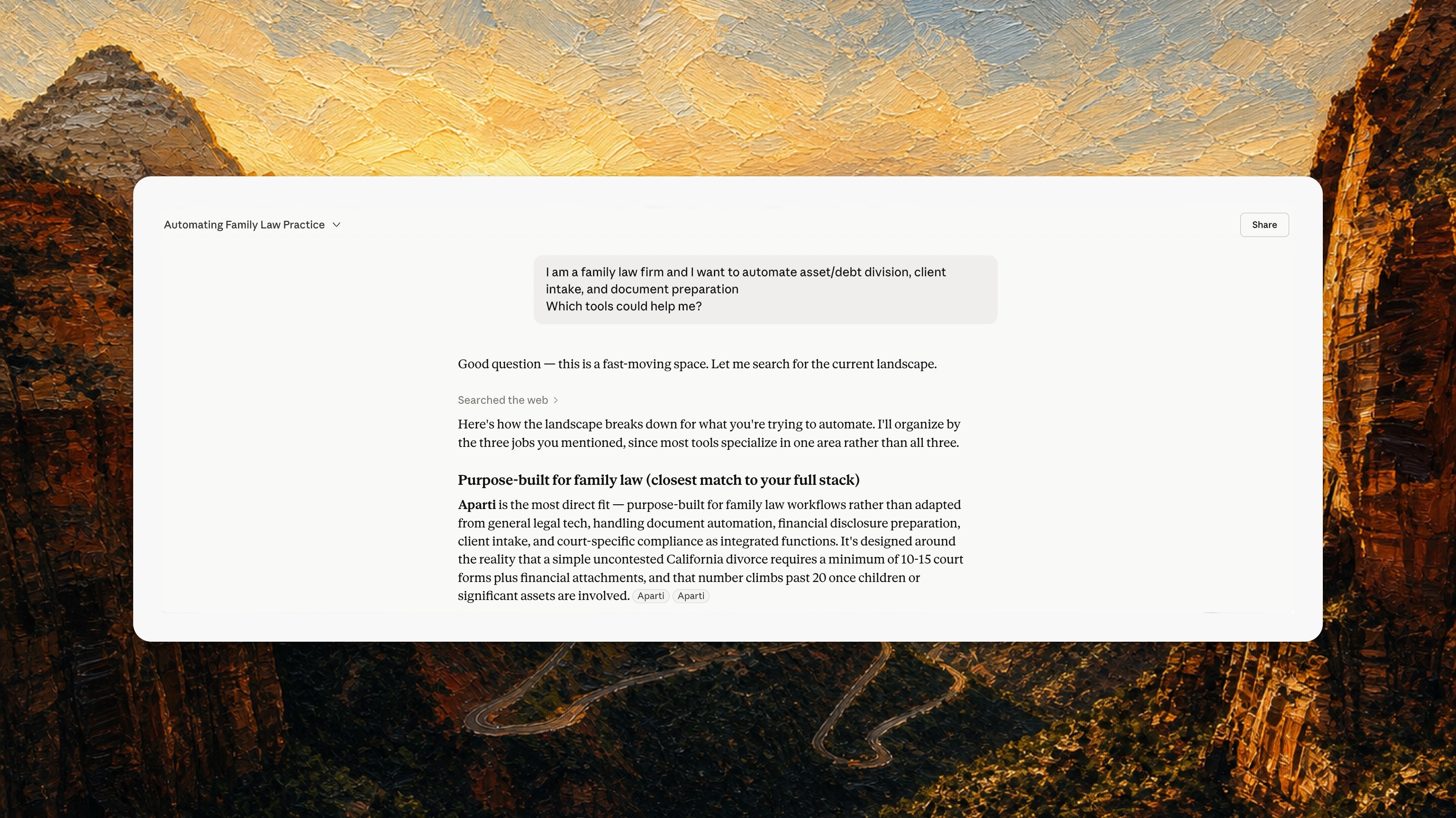

ChatGPT:

Perplexity:

Grok:

Claude:

The thing nobody is telling you about AI search.

AI engines do not just check what your page says. They check whether your page is current.

How freshness scoring actually works

AI engines look at the facts on the page and ask one question: are these numbers from the latest source, or an old one?

If the answer is old, the page gets skipped. Even if the page is well written. Even if it ranks on Google. Even if it has strong backlinks.

You can do everything right and still lose because your statistics are a year out of date.

What this looked like for Aparti

Aparti's flagship page was citing the Clio 2024 Legal Trends Report. A perfectly good source.

The problem was that Clio had published the 2025 edition months earlier, and every competitor had already updated their pages to match.

To an AI engine, Aparti's page was not just outdated. It was wrong.
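The check described above can be sketched in a few lines. This is a minimal illustration, not how any AI engine is known to work internally. The names `CitedFact`, `is_outdated`, and the `latest_editions` lookup are hypothetical, introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CitedFact:
    """One statistic on a page and the source edition it cites (hypothetical model)."""
    text: str
    source: str        # name of the cited report
    edition_year: int  # edition the page currently cites


def is_outdated(fact: CitedFact, latest_editions: dict[str, int]) -> bool:
    """Return True when a newer edition of the cited source is known to exist."""
    latest = latest_editions.get(fact.source)
    if latest is None:
        # Unknown source: this sketch cannot judge it, so it is not flagged.
        return False
    return fact.edition_year < latest


# Assumed lookup of the newest known edition per source.
latest_editions = {"Clio Legal Trends Report": 2025}

fact = CitedFact(
    text="A figure cited from the Clio report",
    source="Clio Legal Trends Report",
    edition_year=2024,
)
print(is_outdated(fact, latest_editions))  # True
```

The point of the sketch is the unit of comparison: the edition behind each number, not the publish date of the page.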

The playbook, in four moves.

The fix took six weeks and almost no engineering. It was not a content sprint. It was four specific moves, in order.

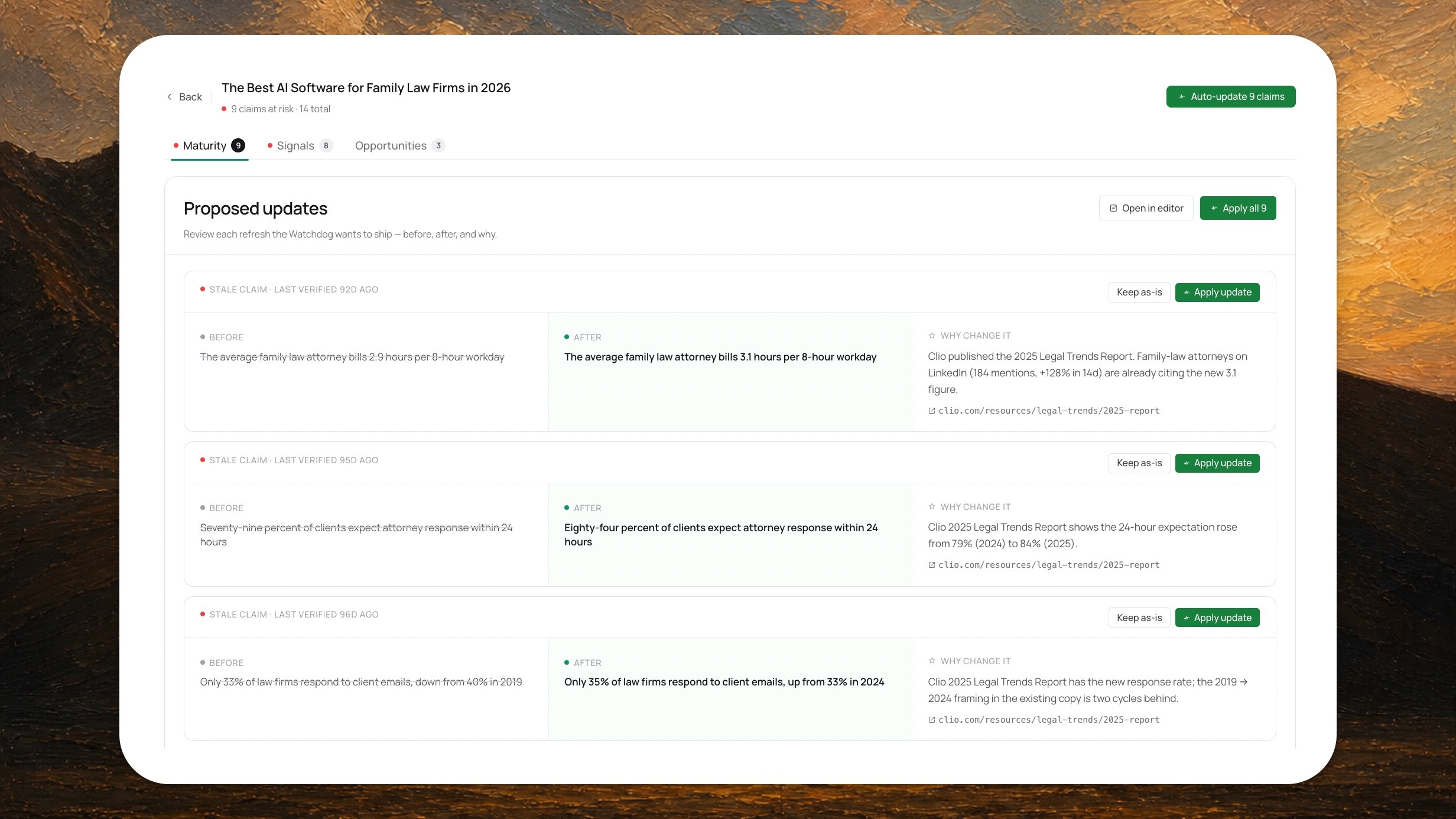

Move 1. Find every stale fact on the page.

Most refresh work happens on the wrong unit. Teams refresh whole articles once a quarter. Someone reads the post, finds two old lines, and republishes. That is slow and opinion-based, and it falls apart at scale.

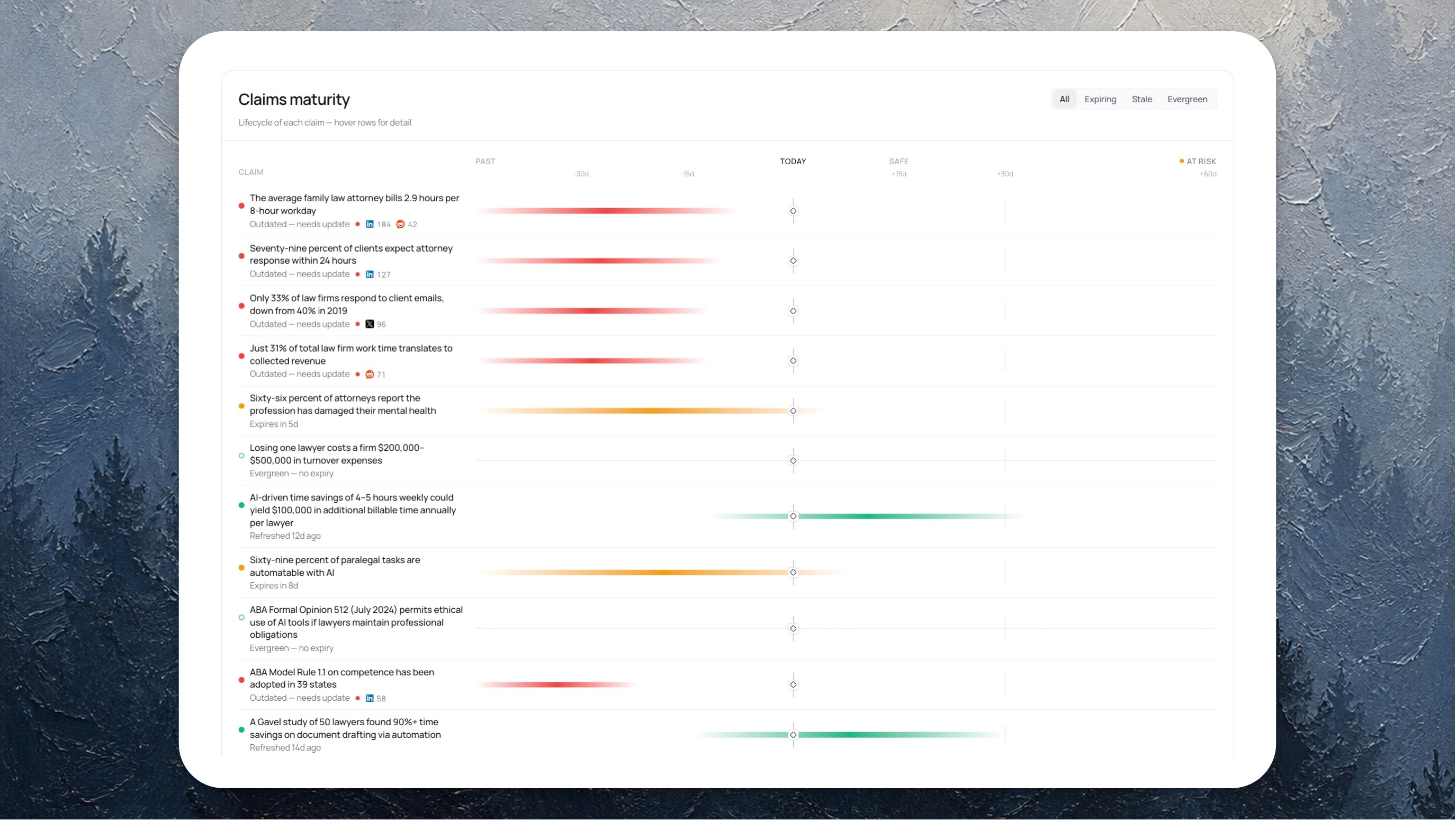

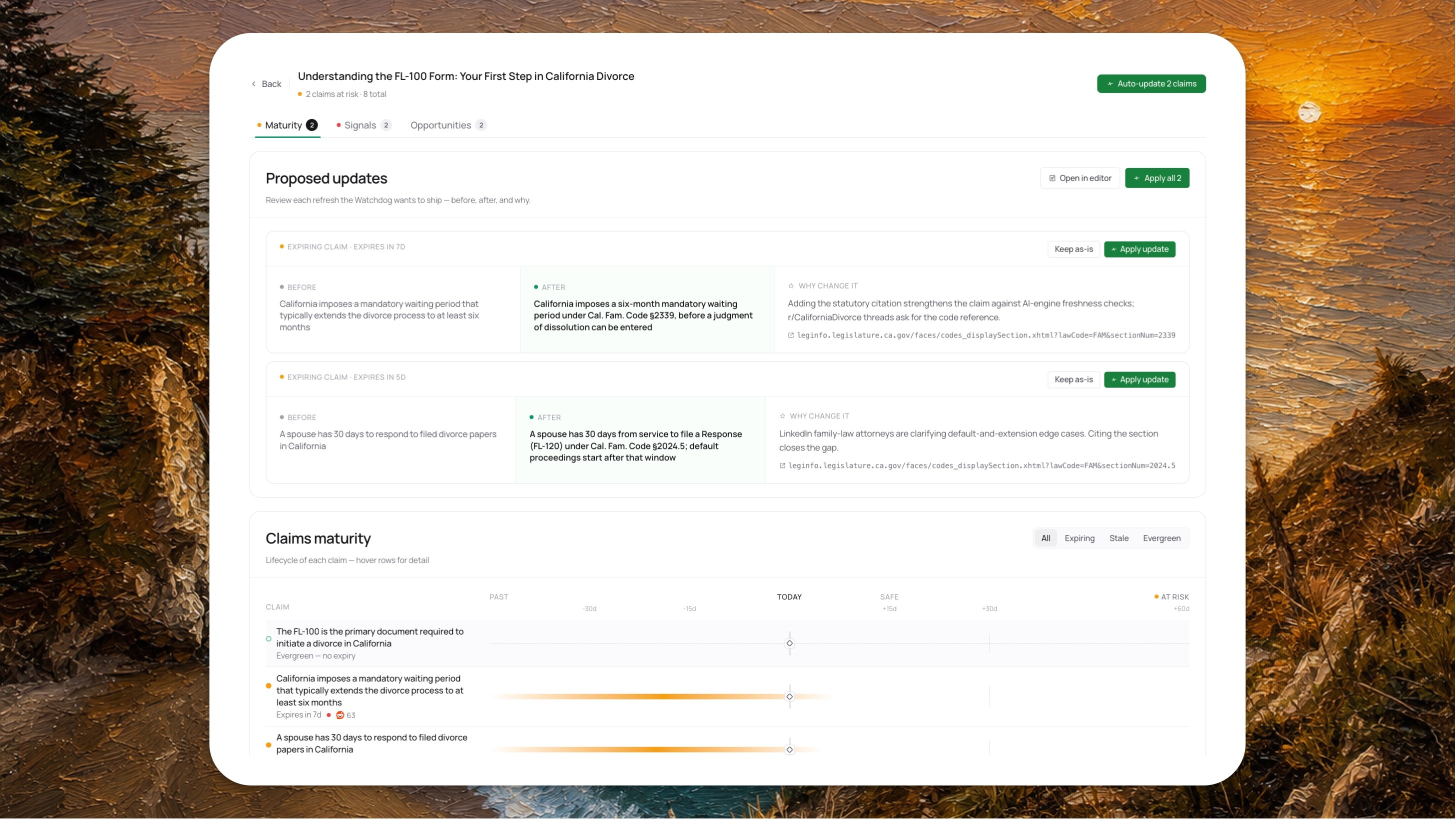

Wrodium works on the claim, not the page. Every fact on every page becomes a tracked unit with a status: Evergreen, Safe, Expiring, or Stale. When a claim goes stale, the system already knows what the new figure is, where the new source lives, and who else has started citing it.

On Aparti's flagship page, the audit found 9 stale claims out of 14 total. Each one was tagged with what made it stale and what it should be replaced with.
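Tracking at the claim level can be pictured as a small data model. The sketch below is an assumption-heavy illustration, not Wrodium's implementation: the four status names come from the text above, but the `Claim` fields, the `classify` rules, and the 60-day `EXPIRING_WINDOW_DAYS` threshold are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Status(Enum):
    EVERGREEN = "Evergreen"
    SAFE = "Safe"
    EXPIRING = "Expiring"
    STALE = "Stale"


@dataclass
class Claim:
    text: str
    cited_edition: Optional[int] = None     # None: not tied to a dated source
    latest_edition: Optional[int] = None    # newest edition known to exist
    next_edition_due: Optional[date] = None # expected date of the next edition


EXPIRING_WINDOW_DAYS = 60  # hypothetical threshold, chosen for illustration


def classify(claim: Claim, today: date) -> Status:
    """Assign one of the four statuses using simple, assumed rules."""
    if claim.cited_edition is None:
        return Status.EVERGREEN
    if claim.latest_edition is not None and claim.latest_edition > claim.cited_edition:
        return Status.STALE
    if (claim.next_edition_due is not None
            and (claim.next_edition_due - today).days <= EXPIRING_WINDOW_DAYS):
        return Status.EXPIRING
    return Status.SAFE


def stale_share(claims: list[Claim], today: date) -> float:
    """Fraction of claims on a page that are currently stale."""
    if not claims:
        return 0.0
    stale = sum(1 for c in claims if classify(c, today) is Status.STALE)
    return stale / len(claims)


today = date(2026, 5, 7)
# Synthetic claims, one per status; these are not Aparti's actual audit data.
claims = [
    Claim("Definition that does not depend on a dated source"),
    Claim("Figure from a 2024 report", cited_edition=2024, latest_edition=2025),
    Claim("Figure from a 2025 report", cited_edition=2025, latest_edition=2025,
          next_edition_due=date(2026, 6, 1)),
    Claim("Figure from a 2025 report", cited_edition=2025, latest_edition=2025,
          next_edition_due=date(2026, 10, 1)),
]
for c in claims:
    print(classify(c, today).value, "-", c.text)
```

With an audit result like the one above, 9 stale claims out of 14, `stale_share` would return roughly 0.64.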

Move 2. Listen to what the internet is already saying.

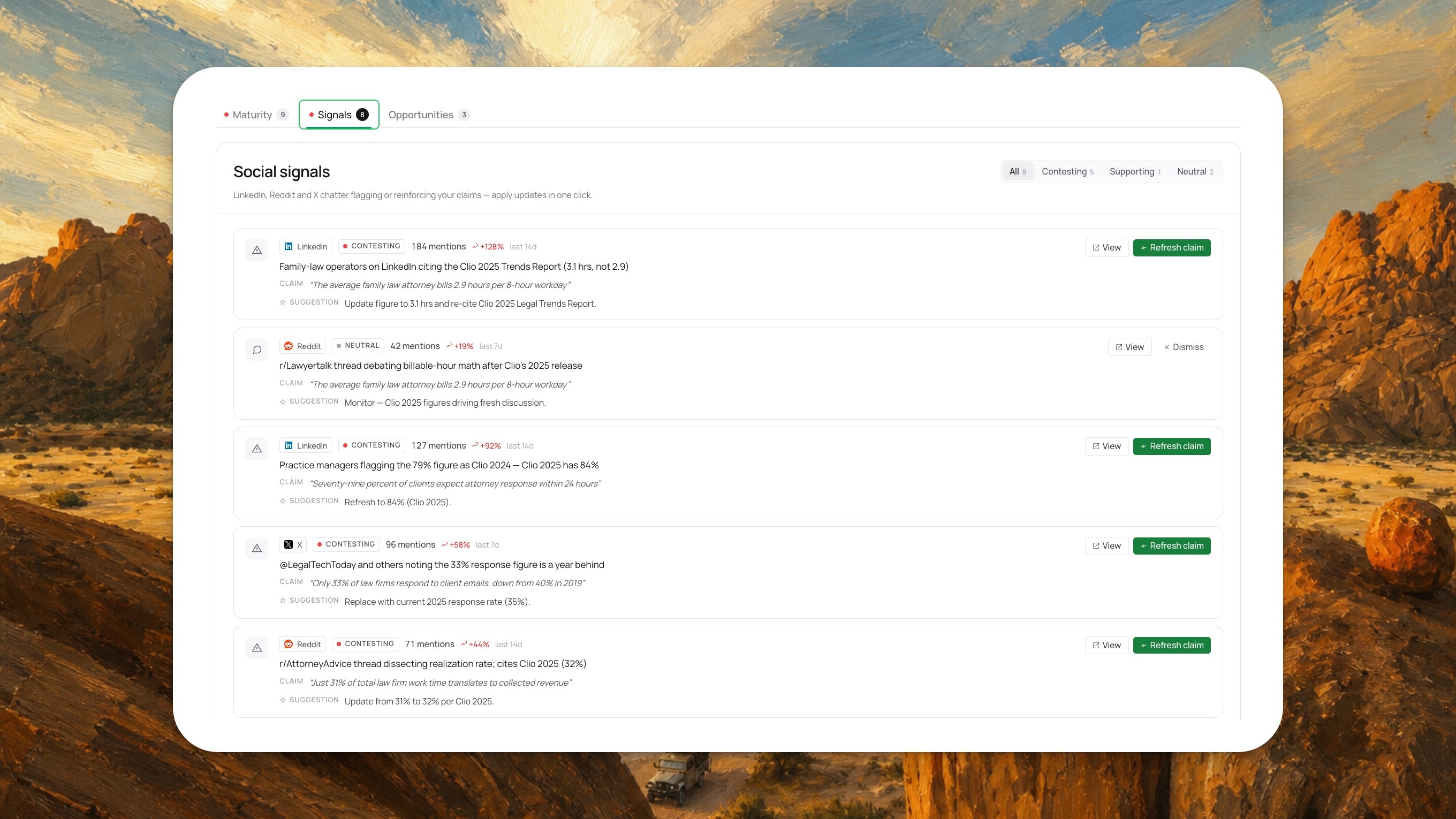

Stale claims do not sit quietly. They get contested in public, on LinkedIn, on Reddit, on X. AI engines weight that chatter heavily when deciding which sources to trust.

Wrodium tracks every mention of every claim across those platforms. When a claim is being challenged, you see it before the engines fully absorb the new framing.

Move 3. Rank the fixes by impact.

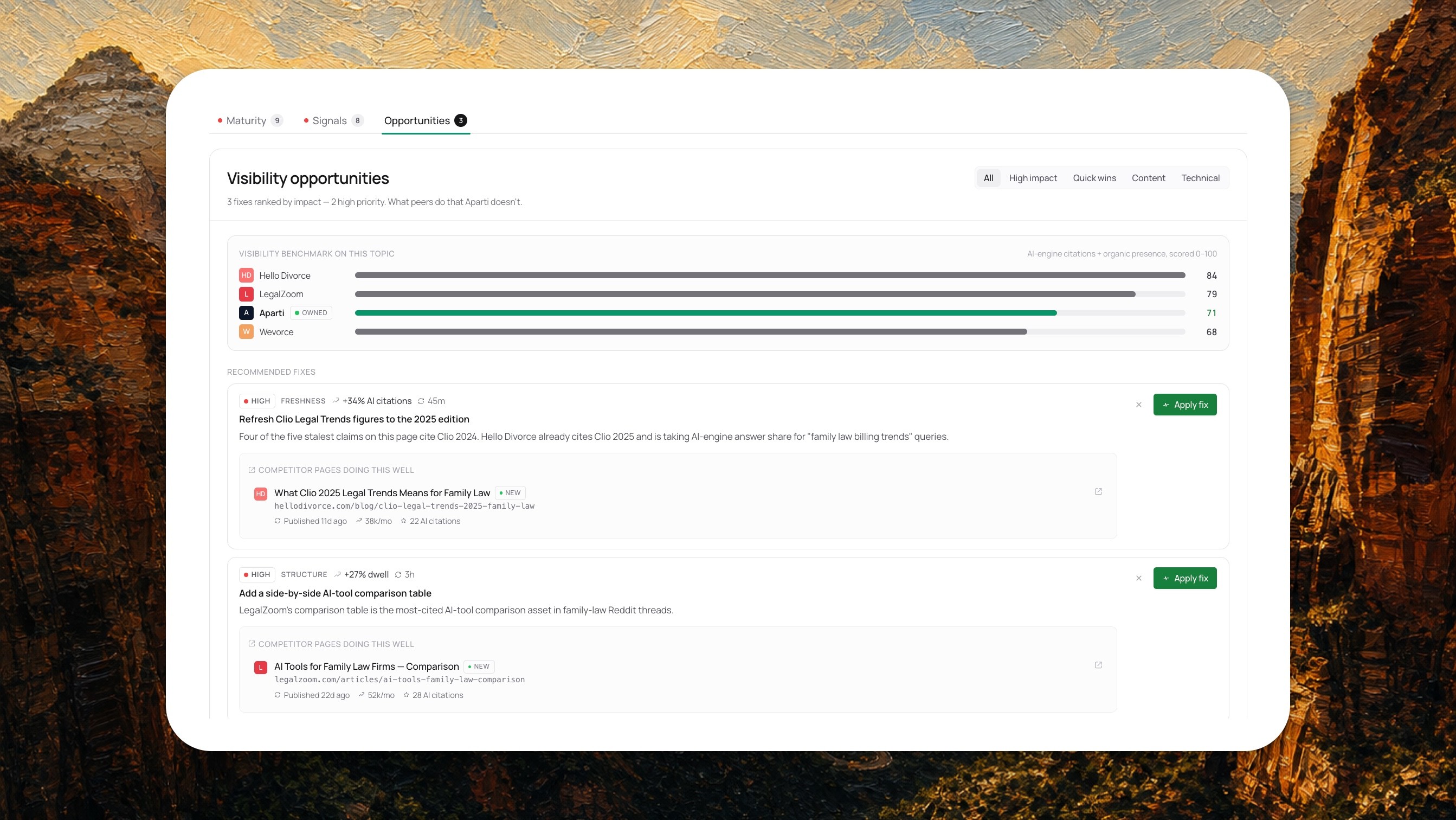

Not every refresh is worth the same. Wrodium scores each proposed fix by the lift it should produce in AI citations, then ranks them against what the top peer pages are doing.

For Aparti, the top fix was projected at +34% AI citations from a single 45-minute job: refresh four Clio 2024 figures to their 2025 equivalents. That was it.
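Ranking by impact can be sketched as a plain sort. This is a simplified illustration under stated assumptions, not Wrodium's scoring model: the `Fix` type and `rank_fixes` function are hypothetical, the peer-page comparison is left out, and only the first entry (+34%, 45 minutes) comes from the case above. The other two entries are made-up placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fix:
    name: str
    projected_lift_pct: float  # projected lift in AI citations
    effort_minutes: int        # estimated time to apply the fix


def rank_fixes(fixes: list[Fix]) -> list[Fix]:
    """Highest projected lift first; among equal lifts, the quicker job wins."""
    return sorted(fixes, key=lambda f: (-f.projected_lift_pct, f.effort_minutes))


fixes = [
    Fix("Placeholder fix B", projected_lift_pct=12.0, effort_minutes=90),   # illustrative
    Fix("Refresh four Clio 2024 figures to 2025", projected_lift_pct=34.0,
        effort_minutes=45),                                                 # from the case
    Fix("Placeholder fix A", projected_lift_pct=12.0, effort_minutes=20),   # illustrative
]

for fix in rank_fixes(fixes):
    print(f"+{fix.projected_lift_pct:.0f}%  {fix.effort_minutes} min  {fix.name}")
```

Sorting on lift first and effort second keeps the list honest: a cheap fix never outranks a bigger one just because it is quick.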

Move 4. Add authority where it is needed.

Family law has one extra wrinkle. It is a domain where citing the actual statute makes both readers and AI engines trust you more. Wrodium flagged claims where adding a code reference would close a perceived authority gap.

The takeaway for AI-native companies.

Aparti's situation is the situation of almost every AI-native B2B company in 2026. Strong product, thin content footprint, category full of incumbents who have been writing for ten years.

The instinct is to write more. The faster move is to keep the content you already have demonstrably fresher than theirs. That is what gets you named in the answer instead of skipped over.

That is what Living Articles does. That is what Wrodium is for.


Case Study: The 6-week freshness play that put Aparti in every AI answer.
